Six MCP Servers, One VS Code Session: Building a Cricket Analytics Pipeline in Microsoft Fabric

The views and opinions expressed in this post are solely my own and do not reflect the opinions, strategy, or official guidance of my employer, Microsoft.

I spent 90 minutes building a data pipeline from raw JSON to Power BI dashboards without opening the Fabric portal. No portal clicks, no copy-pasting connection strings. Just VS Code, GitHub Copilot, and six MCP servers talking to each other.

This is the story of how that happened. Big thanks to Tom Peplow whose post From JSON to GOAT inspired the Fabric foundation for this project and Mimoune Djouallah who persuaded me to stick with duckdb.

[!NOTE] A note on architecture: This post documents how I built a working pipeline in 90 minutes, not how you should design one for production. I build data tools but I'm not a seasoned data engineer. The schema is flat, the ETL is single-run, and there's no error handling to speak of. If you're building something that matters, evaluate the tradeoffs yourself.

The starting point

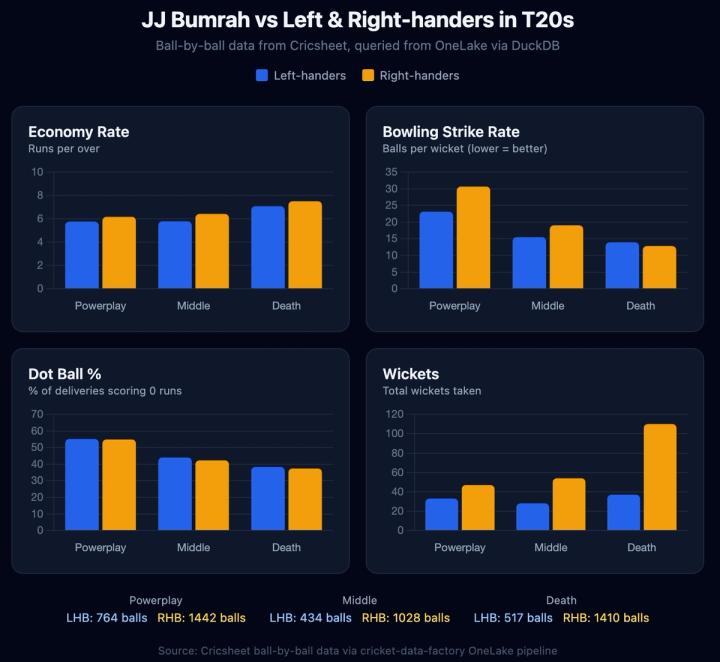

In my previous post, I talked about how I built cricket-mcp, an MCP server that gives AI agents access to 10.9 million ball-by-ball cricket deliveries from Cricsheet. It runs on DuckDB with 26 analytical tools: matchups, phase analysis, career stats, tournament leaderboards. Ask "how does Kohli do against left-arm pace in death overs while chasing?" and you get an answer in seconds.

But it was local-only. One person, one machine, one DuckDB file. I wanted it in Fabric: shared dashboards, governed access, scheduled refreshes, team collaboration. The "enterprise" version.

The question was: how much would I have to rewrite?

The architecture decision that made it work

I looked at five architecture options. The obvious one (rewrite all 26 cricket-mcp tools in T-SQL for Fabric's SQL endpoint) was an immediate no. DuckDB SQL and T-SQL are different languages in practice. QUALIFY, FILTER(WHERE), list aggregation, none of that works on a Lakehouse SQL endpoint.

The insight: DuckDB has a delta extension. OneLake exposes Delta tables over ADLS Gen2. Which means DuckDB can read directly from OneLake.

Cricsheet JSON → PySpark Notebook → Lakehouse (Delta tables in OneLake)

↓

┌─────────────┴─────────────┐

↓ ↓

Power BI cricket-mcp

(DirectLake + DAX) (DuckDB reads Delta from OneLake)

One copy of data, two consumers, zero rewrites. Power BI gets DirectLake (the fastest mode), cricket-mcp keeps all 26 tools unchanged, and both read from the same physical Delta files in OneLake.

Six servers, one session

Here's what sat in my .vscode/mcp.json by the end of the day:

| Server | What it did |

|---|---|

| Fabric MCP Server | Created the lakehouse, uploaded files, inspected table schemas |

| Fabric Analytics MCP | Managed notebook lifecycle, Livy Spark sessions |

| DataFactory MCP | Built dataflows for player enrichment, created pipelines |

| Power BI Modeling MCP | Authored the semantic model: tables, measures, relationships |

| Power BI MCP | Ran DAX queries to validate the model |

| cricket-mcp | The domain-specific analytics, all 26 tools now reading from OneLake |

Every one of these ran as a local MCP server process. Copilot could invoke any of them in a single conversation turn. "Create a lakehouse called CricketLakehouse" → Fabric MCP. "Now build a dataflow that fetches this CSV" → DataFactory MCP. "What's Kohli's record against Hazlewood in T20s?" → cricket-mcp. All in one conversation.

Why six servers (including the cricket one)?

Fair question. Fabric is one product. Why is it five MCP servers?

Fabric covers a lot of ground: storage, data engineering, data integration, analytics. Each workload has its own developer language: M code for dataflows, PySpark for notebooks, DAX for semantic models, TMDL for model definitions. That's a lot of surface area.

The reason there are six is simple: MCP is an open protocol, and different teams build servers for what they know best. The Fabric MCP Server handles OneLake operations. DataFactory MCP handles M code and pipelines. Power BI Modeling MCP handles measures and relationships. Each has a focused set of tools, which actually works well for AI agents. Smaller tool surfaces mean less ambiguity when the LLM is picking which tool to call.

The real benefit shows up in practice: you pick the servers you need for your workflow. Doing ETL? Fabric MCP + DataFactory MCP. Building dashboards? Power BI MCP + Power BI Modeling MCP. Full pipeline like this project? All six. It's composable, and that composability is what made it possible to build the whole thing from one VS Code session.

The build, step by step

Creating infrastructure by talking to it

The first thing I did was ask to create a lakehouse. The Fabric MCP Server's onelake item create tool handled it. Then onelake directory create for the staging directory. Then onelake upload file to push the notebook.

No portal. No clicking through "New → Lakehouse → name it → create". Just a prompt.

Writing 10.9 million rows with a PySpark notebook

The ETL notebook downloads a 94MB ZIP from Cricsheet containing 21,000+ JSON files, every recorded cricket match, ball by ball. It parses them into four tables:

| Table | Rows | What |

|---|---|---|

players |

14K | Player registry |

matches |

21K | Match metadata |

innings |

50K | Innings-level data |

deliveries |

10.9M | Every single ball |

The hard part: the schema had to match cricket-mcp's DuckDB schema exactly. I didn't want to fork the MCP server. It's built on open-source tooling and I wanted the same code to work against both the local DuckDB file and the Fabric lakehouse. That meant same table names, same column names, same types. One wrong column name (date instead of date_start, over instead of over_number) and a tool breaks silently. I put a column name cheat sheet in the project's skill file so the agent wouldn't drift.

The Livy detour

I initially tried the Fabric Jobs API to run the notebook. It returned "Job instance failed without detail error." No stack trace. No output. Nothing.

So I pivoted to the Livy API, Fabric's interactive Spark session interface. You create a session, submit code cells one at a time, and poll each for output. When the deliveries cell took 8 minutes, I could see progress. When something failed, I got the actual Python traceback.

The agent generated a small script (run_livy.py) that reads the .py notebook, splits it into cells, and submits them individually:

python3 scripts/run_livy.py imports

python3 scripts/run_livy.py download # 94MB ZIP

python3 scripts/run_livy.py parse # 21K JSON files

python3 scripts/run_livy.py deliveries # 10.9M rows

python3 scripts/run_livy.py optimize # OPTIMIZE with V-Order

This turned out to be one of the most useful patterns from the whole project. The Jobs API is fire-and-forget. Livy gives you a debugger.

Player enrichment: the DataFactory MCP detour

Cricsheet gives match data but not player profiles. For that, I needed ESPNCricinfo data: batting style, bowling style, playing role. The cricketdata R package publishes a CSV with 16,000 players.

DataFactory MCP created a Dataflow Gen2 that fetches the CSV via Web.Contents, loads it as Csv.Document, and writes to a player_enrichment table in the lakehouse.

This took four attempts. The first dataflow failed because I used the wrong CSV column names (espn_id when it's actually cricinfo_id). The second failed because multi-source dataflows need AllowCombine enabled. The third failed because you can't toggle AllowCombine on an existing dataflow, so you have to create a fresh one. The fourth worked.

Every one of these failures was diagnosed and fixed in the same chat, using the same MCP servers, without touching a browser.

The semantic model: Power BI meets cricket logic

Cricket analytics has specific rules that break naive calculations:

- Batting average is runs per dismissal, not runs per innings

- Balls faced excludes wides (the batter didn't face them)

- Bowling runs exclude byes and legbyes (not the bowler's fault)

- Bowling wickets exclude run outs (not the bowler's skill)

These became DAX measures in a DirectLake semantic model. The Power BI Modeling MCP server authored the model and deployed it via the REST API:

Batting Average = DIVIDE([Batter Runs], [Dismissals], 0)

Balls Faced = COUNTROWS(FILTER(deliveries, deliveries[extras_wides] = 0))

Bowler Runs = SUMX(deliveries, deliveries[runs_total] - deliveries[extras_byes] - deliveries[extras_legbyes])

The Power BI semantic model IS the star schema. No physical dimension tables. Relationships and DAX measures are defined on top of the same four flat tables that cricket-mcp queries directly. One schema, two layers of analytics.

Connecting cricket-mcp to OneLake

Last step: making cricket-mcp read from OneLake instead of a local DuckDB file. Copilot generated src/backends/onelake.ts:

// Load DuckDB extensions

await conn.run("INSTALL delta; LOAD delta;");

await conn.run("INSTALL azure; LOAD azure;");

// Azure CLI auth

await conn.run(`CREATE SECRET (TYPE AZURE, PROVIDER CREDENTIAL_CHAIN, CHAIN 'cli')`);

// Create views over OneLake Delta tables

for (const table of ["players", "matches", "innings", "deliveries"]) {

await conn.run(`CREATE VIEW ${table} AS SELECT * FROM delta_scan('${deltaPath}/${table}')`);

}

DuckDB's delta extension reads Delta Lake natively. The azure extension handles Entra ID auth. Each table becomes a view that points at the OneLake path. All 26 tools work unchanged. Same SQL, same tables, different storage.

# Before: local DuckDB

npx tsx src/index.ts serve

# After: OneLake

npx tsx src/index.ts serve --backend onelake \

--workspace-id <guid> --lakehouse-id <guid>

What I learned

MCP servers compose. Copilot picks which server to call based on what you're asking, and it works well across all six. Infrastructure goes to Fabric MCP, data ingestion to DataFactory MCP, analytics to cricket-mcp.

The Livy API is your friend. The Fabric Jobs API is for production scheduling. For development, especially when you're iterating on a notebook that writes 10.9 million rows, Livy's cell-by-cell execution with real output is essential.

Schema compatibility is fragile. I spent more time on column name mismatches than on actual logic. over vs over_number. date vs date_start. super_over vs is_super_over. A type system that checked these at compile time would have saved hours. Instead, I wrote a cheat sheet and encoded it as agent context.

DirectLake + DuckDB work well together. Power BI gets the fastest visualization mode, DuckDB gets millisecond analytics, and they share the same data with no sync needed. Keeping both engines on the same Delta files was the best decision in the whole project.

The result

The cricket-data-factory repo on GitHub. Everything to go from raw Cricsheet JSON to Power BI dashboards and 26 MCP tools, all orchestrated from VS Code.

┌─────────────────────────────────────────────────────────────────────────────────┐

│ VS Code + Copilot │

│ │

│ Fabric MCP │ Fabric Analytics │ DataFactory │ PBI Modeling │ PBI │ cricket-mcp │

│ │ MCP │ MCP │ MCP │ MCP │ │

└──────┬──────┴────────┬─────────┴──────┬──────┴──────┬───────┴──┬──┴──────┬──────┘

▼ ▼ ▼ ▼ ▼ ▼

┌─────────────────────────────────────────────────────────────────────────────────┐

│ OneLake (CricketLakehouse) │

│ players │ matches │ innings │ deliveries │ player_enrichment │

└─────────────────────────────────────────────────────────────────────────────────┘

No portal was opened in the making of this pipeline.

Inspired by From JSON to GOAT by Tom Peplow. Built with cricket-mcp, Fabric MCP Server, DataFactory MCP, Fabric Analytics MCP, Power BI MCP, and Power BI Modeling MCP.